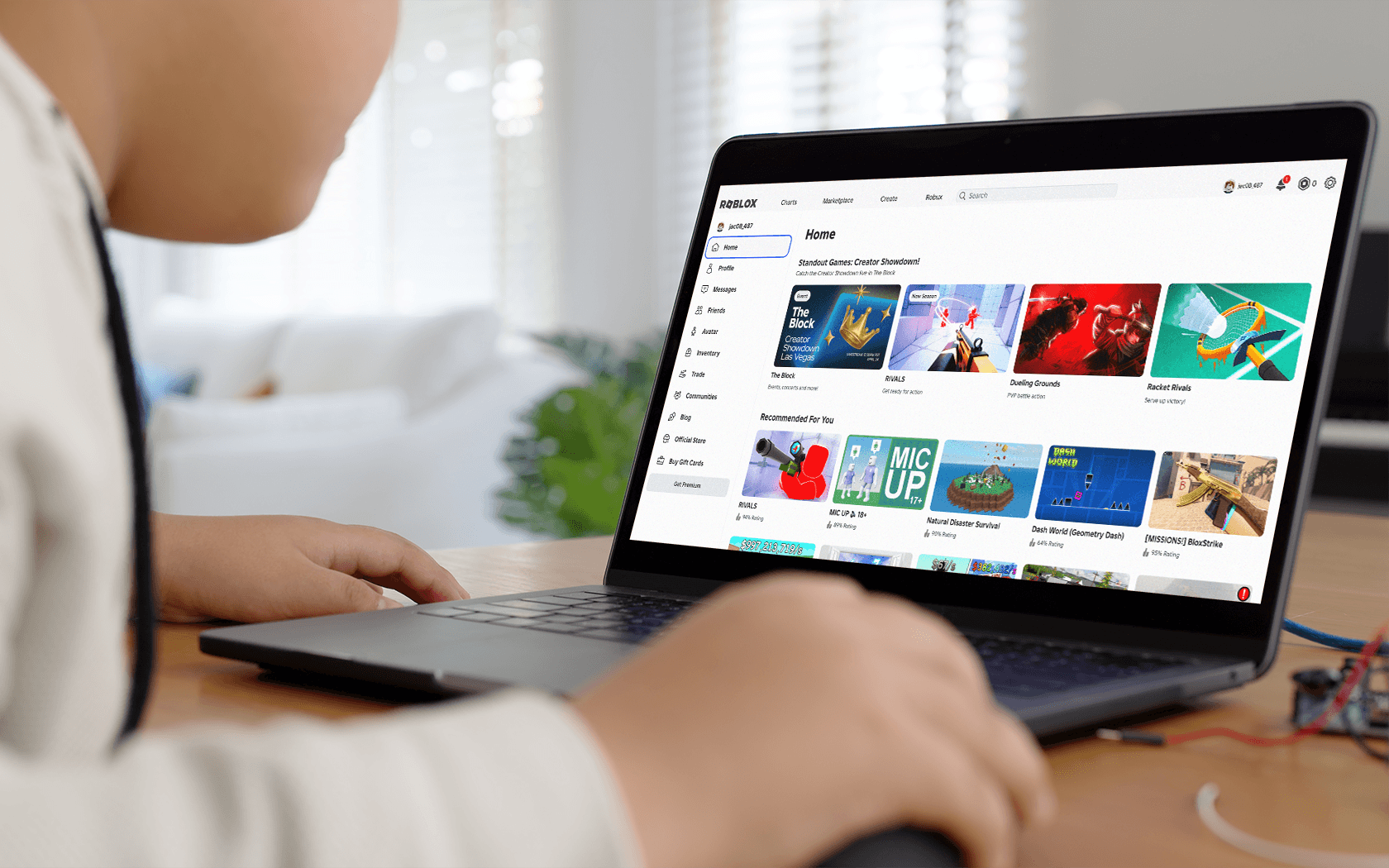

When generative AI tools like ChatGPT entered the mainstream, tech companies rushed to add similar features to their own platforms. Snapchat was one of the first social apps to do so, launching a built-in chatbot called My AI. Unlike a typical search tool, My AI lives directly inside Snapchat and looks like a contact your child can chat with.

For parents, that design choice raises important questions. What exactly is My AI? How does it work? And is it safe for kids who already spend time on Snapchat? Here’s what families should know.

{{subscribe-form}}

What is Snapchat My AI?

My AI is an AI-powered chatbot built into Snapchat. It’s designed to respond conversationally to user messages, answer questions and make suggestions. Visually, it appears alongside a user’s other chats and can be customized with a name and Bitmoji, making it feel more like a friend than a tool.

My AI was first introduced to Snapchat+ subscribers in February 2023 and was rolled out to all Snapchat users that April. Snapchat has since added more controls and guardrails, but the feature remains a built-in part of the app and cannot be fully removed.

Why did My AI raise concerns early on?

When My AI first launched, journalists and researchers tested its responses by posing as teens. Some of those early interactions were troubling. In a number of documented cases, the chatbot offered inappropriate or unsafe advice, including guidance related to substance use, sexual topics and avoiding parental restrictions.

Snapchat acknowledged these issues and has since updated My AI with additional safeguards. Many of the most extreme early responses are no longer reproducible in the same way. However, the initial incidents highlighted a core issue that still matters today: AI chatbots are not reliable judges of age, context or appropriateness.

Is Snapchat My AI safe for kids now?

Snapchat has made changes to My AI since its launch, including:

- Adding stronger content moderation

- Restricting certain types of responses

- Providing clearer warnings about its limitations

That said, My AI is not designed specifically for children, and it still carries meaningful risks for younger users.

Parents should understand a few key points:

1. AI can still give incorrect or misleading information

Like other AI chatbots, My AI can “hallucinate” and present false information confidently. Kids may not be able to tell when an answer is wrong or incomplete.

2. Age-appropriateness remains a challenge

Even with guardrails in place, AI systems can struggle with nuance, maturity and boundaries. Responses may still touch on topics that aren’t appropriate for younger users.

3. The design can blur emotional boundaries

Because My AI looks and behaves like a chat contact, some kids may treat it as a friend or trusted advisor rather than a tool. That can be confusing, especially for younger teens.

4. Data and privacy matter

Snapchat states that conversations with My AI may be stored and used to improve the feature. Users are warned not to share personal or sensitive information, but kids may not fully understand what that means in practice.

What should parents know about oversight?

Snapchat’s Family Center allows parents to see limited information about their child’s activity, including whether they’ve interacted with My AI. However, parents cannot see the content of those conversations, and controls are relatively light compared to some other platforms.

That means parental oversight relies heavily on conversation, trust and boundaries at home.

How can parents approach My AI cautiously?

There’s no way to fully “kid-proof” My AI. For families with younger users, caution is warranted. Some practical steps include:

- Talking openly about what My AI is and what it isn’t

- Making it clear that it’s not a friend or an authority figure

- Setting expectations about not sharing personal information

- Encouraging kids to check with a parent before acting on advice from the bot

- Reconsidering Snapchat access entirely for younger children (Snapchat’s minimum age is 13)

So, should kids use Snapchat My AI?

For younger kids, My AI is best avoided. For teens who already use Snapchat, My AI adds another layer of complexity to an app that raises its own safety and wellbeing questions.

While Snapchat has made improvements since My AI’s rocky launch, the feature still reflects the broader challenge of putting conversational AI inside social platforms used by kids. Until stronger, kid-specific safeguards exist, the safest approach is awareness, supervision and ongoing conversation.

Image Credit: tulcarion / Getty Images

{{messenger-cta}}